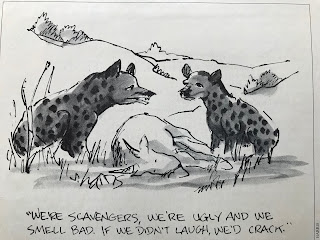

From Punch in the 1980s.

Sunday, December 31, 2017

He was elected borough ale taster

From Shakespeare: The World as Stage by Bill Bryson. Page 32.

Now that's an advanced democracy.

Literate or not, John was a popular and respected fellow. In 1556 he took up the first of many municipal positions when he was elected borough ale taster. The job required him to make sure that measures and prices were correctly observed throughout the town—not only by innkeepers but also by butchers and bakers. Two years later he became a constable—a position that then, as now, argued for some physical strength and courage—and the next year became an “affeeror” (or “affurer”), someone who assessed fines for matters not handled by existing statutes. Then he became successively burgess, chamberlain, and alderman, which last entitled him to be addressed as “Master” rather than simply as “Goodman.” Finally, in 1568, he was placed in the highest elective office in town, high bailiff—mayor in all but name. So William Shakespeare was born into a household of quite a lot of importance locally.

Outcomes not based on luck, surprise, or accident but the power of the individual

From Carnage and Culture by Victor Davis Hanson. Page 368.

If the absence of such liberal institutions hampered the overall Japanese war effort on June 4, 1942, it was the regimentation of the Japanese military culture itself, seen mostly in the sheer absence of individuality, that would prove so critical in such a fast-moving and far-ranging battle like Midway. Close examination of the battle suggests that the Americans’ intrinsic faith in individualism, a product itself of a long tradition of consensual government and free expression, at every turn of the encounter proved decisive. Far better than luck, surprise, or accident, the power of the individual himself explains the Americans’ incredible victory.

La forêt by Michaël Brack

La forêt by Michaël Brack.

Captures the primordial fear of the dark and of the threatening, bewildering forest which blanketed so much of ancient Europe.

Aurelius and Csikszentmihalyi

From Meditations by Marcus Aurelius, Book V.

It was this first piece that initially caught my eye. Some who are near and dear to my heart have a profound reluctance to leave the warmth of bed in the morning. They do, but it is difficult for them. In his opening comments of Book V, Aurelius dismisses the allure.

At dawn, when you have trouble getting out of bed, tell yourself: ‘I have to go to work – as a human being. What do I have to complain of, if I’m going to do what I was born for – the things I was brought into the world to do? Or is this what I was created for? To huddle under the blankets and stay warm?’

But the full section, particularly the second half, brought to mind Flow: The Psychology of Optimal Experience by Mihaly Csikszentmihalyi.

At dawn, when you have trouble getting out of bed, tell yourself: “I have to go to work — as a human being. What do I have to complain of, if I’m going to do what I was born for — the things I was brought into the world to do? Or is this what I was created for? To huddle under the blankets and stay warm?”

—But it’s nicer here. . . .

So you were born to feel “nice”? Instead of doing things and experiencing them? Don’t you see the plants, the birds, the ants and spiders and bees going about their individual tasks, putting the world in order, as best they can? And you’re not willing to do your job as a human being? Why aren’t you running to do what your nature demands?

—But we have to sleep sometime. . . .

Agreed. But nature set a limit on that—as it did on eating and drinking. And you’re over the limit. You’ve had more than enough of that. But not of working. There you’re still below your quota.

You don’t love yourself enough. Or you’d love your nature too, and what it demands of you. People who love what they do wear themselves down doing it, they even forget to wash or eat. Do you have less respect for your own nature than the engraver does for engraving, the dancer for the dance, the miser for money or the social climber for status? When they’re really possessed by what they do, they’d rather stop eating and sleeping than give up practicing their arts.

I don't recall Mihaly Csikszentmihalyi mentioning Aurelius but a little googling seems to indicate that indeed he did. Interesting to find the connection between two fine minds engaging around the same insight - the capacity to lose oneself in concentrated, engaged effort.

Saturday, December 30, 2017

Reasonable assumptions are not necessarily correct

From Shakespeare: The World as Stage by Bill Bryson. Page 32.

Shakespeare’s father is often said (particularly by those who wish to portray William Shakespeare as too deprived of stimulus and education to have written the plays attributed to him) to have been illiterate. Illiteracy was the usual condition in sixteenth-century England, to be sure. According to one estimate at least 70 percent of men and 90 percent of women of the period couldn’t even sign their names. But as one moved up the social scale, literacy rates rose appreciably. Among skilled craftsmen—a category that included John Shakespeare—some 60 percent could read, a clearly respectable proportion.

The conclusion of illiteracy with regard to Shakespeare’s father is based on the knowledge that he signed his surviving papers with a mark. But lots of Elizabethans, particularly those who liked to think themselves busy men, did likewise even when they could read, rather as busy executives might today scribble their initials in the margins of memos. As Samuel Schoenbaum points out, Adrian Quiney, a Stratford contemporary of the Shakespeares, signed all his known Stratford documents with a cross and would certainly be considered illiterate except that we also happen to have an eloquent letter in his own hand written to William Shakespeare in 1598.

Lines Composed a Few Miles above Tintern Abbey by William Wordsworth

Lines Composed a Few Miles above Tintern Abbey, On Revisiting the Banks of the Wye during a Tour. July 13, 1798

by William Wordsworth

Five years have past; five summers, with the length

Of five long winters! and again I hear

These waters, rolling from their mountain-springs

With a soft inland murmur.—Once again

Do I behold these steep and lofty cliffs,

That on a wild secluded scene impress

Thoughts of more deep seclusion; and connect

The landscape with the quiet of the sky.

The day is come when I again repose

Here, under this dark sycamore, and view

These plots of cottage-ground, these orchard-tufts,

Which at this season, with their unripe fruits,

Are clad in one green hue, and lose themselves

'Mid groves and copses. Once again I see

These hedge-rows, hardly hedge-rows, little lines

Of sportive wood run wild: these pastoral farms,

Green to the very door; and wreaths of smoke

Sent up, in silence, from among the trees!

With some uncertain notice, as might seem

Of vagrant dwellers in the houseless woods,

Or of some Hermit's cave, where by his fire

The Hermit sits alone.

These beauteous forms,

Through a long absence, have not been to me

As is a landscape to a blind man's eye:

But oft, in lonely rooms, and 'mid the din

Of towns and cities, I have owed to them,

In hours of weariness, sensations sweet,

Felt in the blood, and felt along the heart;

And passing even into my purer mind

With tranquil restoration:—feelings too

Of unremembered pleasure: such, perhaps,

As have no slight or trivial influence

On that best portion of a good man's life,

His little, nameless, unremembered, acts

Of kindness and of love. Nor less, I trust,

To them I may have owed another gift,

Of aspect more sublime; that blessed mood,

In which the burthen of the mystery,

In which the heavy and the weary weight

Of all this unintelligible world,

Is lightened:—that serene and blessed mood,

In which the affections gently lead us on,—

Until, the breath of this corporeal frame

And even the motion of our human blood

Almost suspended, we are laid asleep

In body, and become a living soul:

While with an eye made quiet by the power

Of harmony, and the deep power of joy,

We see into the life of things.

If this

Be but a vain belief, yet, oh! how oft—

In darkness and amid the many shapes

Of joyless daylight; when the fretful stir

Unprofitable, and the fever of the world,

Have hung upon the beatings of my heart—

How oft, in spirit, have I turned to thee,

O sylvan Wye! thou wanderer thro' the woods,

How often has my spirit turned to thee!

And now, with gleams of half-extinguished thought,

With many recognitions dim and faint,

And somewhat of a sad perplexity,

The picture of the mind revives again:

While here I stand, not only with the sense

Of present pleasure, but with pleasing thoughts

That in this moment there is life and food

For future years. And so I dare to hope,

Though changed, no doubt, from what I was when first

I came among these hills; when like a roe

I bounded o'er the mountains, by the sides

Of the deep rivers, and the lonely streams,

Wherever nature led: more like a man

Flying from something that he dreads, than one

Who sought the thing he loved. For nature then

(The coarser pleasures of my boyish days

And their glad animal movements all gone by)

To me was all in all.—I cannot paint

What then I was. The sounding cataract

Haunted me like a passion: the tall rock,

The mountain, and the deep and gloomy wood,

Their colours and their forms, were then to me

An appetite; a feeling and a love,

That had no need of a remoter charm,

By thought supplied, not any interest

Unborrowed from the eye.—That time is past,

And all its aching joys are now no more,

And all its dizzy raptures. Not for this

Faint I, nor mourn nor murmur; other gifts

Have followed; for such loss, I would believe,

Abundant recompense. For I have learned

To look on nature, not as in the hour

Of thoughtless youth; but hearing oftentimes

The still sad music of humanity,

Nor harsh nor grating, though of ample power

To chasten and subdue.—And I have felt

A presence that disturbs me with the joy

Of elevated thoughts; a sense sublime

Of something far more deeply interfused,

Whose dwelling is the light of setting suns,

And the round ocean and the living air,

And the blue sky, and in the mind of man:

A motion and a spirit, that impels

All thinking things, all objects of all thought,

And rolls through all things. Therefore am I still

A lover of the meadows and the woods

And mountains; and of all that we behold

From this green earth; of all the mighty world

Of eye, and ear,—both what they half create,

And what perceive; well pleased to recognise

In nature and the language of the sense

The anchor of my purest thoughts, the nurse,

The guide, the guardian of my heart, and soul

Of all my moral being.

Nor perchance,

If I were not thus taught, should I the more

Suffer my genial spirits to decay:

For thou art with me here upon the banks

Of this fair river; thou my dearest Friend,

My dear, dear Friend; and in thy voice I catch

The language of my former heart, and read

My former pleasures in the shooting lights

Of thy wild eyes. Oh! yet a little while

May I behold in thee what I was once,

My dear, dear Sister! and this prayer I make,

Knowing that Nature never did betray

The heart that loved her; 'tis her privilege,

Through all the years of this our life, to lead

From joy to joy: for she can so inform

The mind that is within us, so impress

With quietness and beauty, and so feed

With lofty thoughts, that neither evil tongues,

Rash judgments, nor the sneers of selfish men,

Nor greetings where no kindness is, nor all

The dreary intercourse of daily life,

Shall e'er prevail against us, or disturb

Our cheerful faith, that all which we behold

Is full of blessings. Therefore let the moon

Shine on thee in thy solitary walk;

And let the misty mountain-winds be free

To blow against thee: and, in after years,

When these wild ecstasies shall be matured

Into a sober pleasure; when thy mind

Shall be a mansion for all lovely forms,

Thy memory be as a dwelling-place

For all sweet sounds and harmonies; oh! then,

If solitude, or fear, or pain, or grief,

Should be thy portion, with what healing thoughts

Of tender joy wilt thou remember me,

And these my exhortations! Nor, perchance—

If I should be where I no more can hear

Thy voice, nor catch from thy wild eyes these gleams

Of past existence—wilt thou then forget

That on the banks of this delightful stream

We stood together; and that I, so long

A worshipper of Nature, hither came

Unwearied in that service: rather say

With warmer love—oh! with far deeper zeal

Of holier love. Nor wilt thou then forget,

That after many wanderings, many years

Of absence, these steep woods and lofty cliffs,

And this green pastoral landscape, were to me

More dear, both for themselves and for thy sake!

Leaders who were interested solely in Western methods, not Western values

From Carnage and Culture by Victor Davis Hanson. Page 364.

Japanese mobility and ruse were reflected not just in Admiral Yamamoto’s famous dictum about the relative industrial capacity of the two belligerents—that he could raise hell in the Pacific for six months but could promise nothing after that. Rather, almost all serious strategists in the Japanese military also acknowledged their discomfort with a quite novel situation of all-out warfare with the Americans and British that would require continual head-on confrontations with the Anglo-American fleet. In 1941 no one in the Japanese high command seemed aware that a surprise attack on the Americans would in Western eyes lead to total war, in which the United States would either destroy its adversary or face annihilation in the attempt. But, then, it was a historic error of non-Westerners, beginning with Xerxes’ invasion of Greece, to assume that democracies were somehow weak and timid. Although slow to anger, Western constitutional governments usually preferred wars of annihilation—wiping the Melians off the map of the Aegean, sowing the ground of Carthage with salt, turning Ireland into a near wasteland, wasting Jerusalem before reoccupying it, driving an entire culture of Native Americans onto reservations, atomizing Japanese cities—and were far more deadly adversaries than military dynasts and autocrats. Despite occasional brilliant adaptation of trickery and surprise, and the clear record of success in “the indirect approach” to war—Epaminondas’s great raid into Messenia (369 B.C.) and Sherman’s March to the Sea (1864) are notable examples—Western militaries continued to believe that the most economic way of waging war was to find the enemy, collect sufficient forces to overwhelm him, and then advance directly and openly to annihilate him on the battlefield—all part of a cultural tradition to end hostilities quickly, decisively, and utterly. 
To read of American naval operations in World War II is to catalog a series of continual efforts to advance westward toward Japan, discover and devastate the Japanese fleet, and physically wrest away all territory belonging to the Japanese government until reaching the homeland itself. The American sailors at Midway were also the first wave of an enormous draft that would mobilize more than 12 million citizens into the armed forces. In the manner of the Romans after Cannae or the democracies in World War I, American political representatives had voted for war with Japan. Polls revealed near unanimous public approval for a ghastly conflict of annihilation against the perpetrators of Pearl Harbor. The United States would also continue to hold elections throughout the conflict as the elected government crafted one of the most radical industrial and cultural revolutions in the history of the Republic in turning the country into a huge arms-producing camp.

The Japanese, in contrast, had only sporadically adopted nineteenth-century European ideas of constitutional government and civic militarism—and both had been discredited by military regimes of the 1930s. Japanese military thinkers believed that a far superior method of fielding large and spirited armies—and one more in line with their own cultural traditions—lay in inculcating the entire population with a fanatical devotion to the emperor and a shared belief in the inevitable rule of the Japanese people. A few wise and all-knowing military officers alone could appreciate the Japanese warrior spirit, and most of them saw little need for the public to debate the wisdom of attacking the largest industrial power in the world:

What Westerners did not realize was that underneath the veneer of modernity and westernization, Japan was still Oriental and that her plunge from feudalism to imperialism had come so precipitously that her leaders, who were interested solely in Western methods, not Western values, had neither the time nor inclination to develop liberalism and humanitarianism. (J. Toland, The Rising Sun, vol. 1, 74)

Postmodernism is detrimental to mental well-being

The findings from this research comport well with my priors, so I have to reflexively treat them with skepticism. That said, the study uses a large population sample of 18,000 and seems rigorously designed. From Is envy harmful to a Society's psychological health and wellbeing? A longitudinal study of 18,000 adults by Redzo Mujcic.

Nearly 100 years ago, the philosopher and mathematician Bertrand Russell warned of the social dangers of widespread envy. One view of modern society is that it is systematically developing a set of institutions -- such as social media and new forms of advertising -- that make people feel inadequate and envious of others. If so, how might that be influencing the psychological health of our citizens? This paper reports the first large-scale longitudinal research into envy and its possible repercussions. The paper studies 18,000 randomly selected individuals over the years 2005, 2009, and 2013. Using measures of SF-36 mental health and psychological well-being, four main conclusions emerge. First, the young are especially susceptible. Levels of envy fall as people grow older. This longitudinal finding is consistent with a cross-sectional pattern noted recently by Nicole E. Henniger and Christine R. Harris, and with the theory of socioemotional regulation suggested by scholars such as Laura L. Carstensen. Second, using fixed-effects equations and prospective analysis, the analysis reveals that envy today is a powerful predictor of worse SF-36 mental health and well-being in the future. A change from the lowest to the highest level of envy, for example, is associated with a worsening of SF-36 mental health by approximately half a standard deviation (p < .001). Third, no evidence is found for the idea that envy acts as a useful motivator. Greater envy is associated with slower -- not higher -- growth of psychological well-being in the future. Nor is envy a predictor of later economic success. Fourth, the longitudinal decline of envy leaves unaltered a U-shaped age pattern of well-being from age 20 to age 70. These results are consistent with the idea that society should be concerned about institutions that stimulate large-scale envy.

The key findings are:

Young people are especially susceptible to cultivated envy.

Envy fosters poor mental health outcomes.

Envy is no motivator of success; there is no association between envy and positive economic outcomes.

Institutions which cultivate envy are a long-term threat to societal well-being.

The trappings of postmodernism (cultivation of victimhood, epistemic closure, socialism, white privilege) are all manifestations of rooted envy. The research suggests we ought to be classifying postmodernism as detrimental to mental and economic well-being.

Friday, December 29, 2017

Four Probabilities of Pedagogy

From The Iron Laws of Pedagogy by Bryan Caplan. I am not sure that these are actually Iron Laws. More like the default norms of education. Maybe even more aptly, The Four Probabilities of Pedagogy.

First Iron Law: Students learn only a small fraction of what they're taught.

Second Iron Law: Students remember only a small fraction of what they learn.

Third Iron Law: Most of the lessons students remember lack practical applications.

Fourth Iron Law: Even when students remember something with practical applications, they still usually fail to apply what they know... unless you explicitly tell them to do so.

These feet were made for walking

From Shakespeare: The World as Stage by Bill Bryson. Page 32.

Stratford was a reasonably consequential town. With a population of roughly two thousand at a time when only three cities in Britain had ten thousand inhabitants or more, it stood about eighty-five miles northwest of London—a four-day walk or two-day horseback ride—on one of the main woolpack routes between the capital and Wales. (Travel for nearly everyone was on foot or by horseback, or not at all. Coaches as a means of public transport were invented in the year of Shakespeare’s birth but weren’t generally used by the masses until the following century.)

She's a keeper

Heh. The original question.

Imagine the transporter from Star Trek exists. But the way it works is that anyone getting into it dies painlessly and an exact copy, including all consciousness and memories, is made at the destination. Would you ever use it?

— Sam Bowman 🌐 (@s8mb) December 29, 2017

Somebody asks Bowman a question, to which he replies.

Nope, but I've seen/read similar - just been arguing about the issue with my gf and trying to prove that she's in the minority.

— Sam Bowman 🌐 (@s8mb) December 29, 2017

And speaking for cerebral guys everywhere, John Sargeant observes:

Such a discussion means your gf is a keeper. Never let anyone energise her away from you! Happy New Year.

— John Sargeant (@JPSargeant78) December 29, 2017

One of the most remarkable revolutions in the history of arms

From Carnage and Culture by Victor Davis Hanson. Page 359.

In one of the most remarkable revolutions in the history of arms, Japan found herself in more than a quarter century (1870–1904) the near military equal of the best of European powers. Although lacking the population and natural resources of its immediate neighbors Russia and China, Japan had proved that with a topflight Westernized military it could defeat forces far greater in number. Japan is thus the classic refutation of the now popular idea that topography, resources such as iron and coal deposits, or genetic susceptibility to disease and other natural factors largely determine cultural dynamism and military prowess. The Japanese mainland was unchanging—before, during, and after its miraculous century-long military ascendancy—but what was not static was its radical nineteenth-century emulation of elements of the Western tradition completely foreign to its native heritage.

Not only were Japanese admirals and generals dressed and titled like their European counterparts, their ships and guns were nearly identical as well. Unfortunately for their Asian adversaries, the Westernized Japanese military was not a mere passing phase. Japan envisioned Western arms and tactics not as an auxiliary to centuries-long Japanese military doctrines or as a veneer of ostentation, but as a radical, fundamental, and permanent restructuring of Japan’s armed forces that would lead to hegemony in Asia.

Thursday, December 28, 2017

Sorry, your luggage is in Rome

From Punch in the 1980s.

Reminds me of Heathrow in the 1970s and 80s when there were many go-slows, work stoppages and other interruptions to travel.

Most varieties of light meat were helpfully categorized as fish

From Shakespeare: The World as Stage by Bill Bryson. Page 31.

Food was similarly regulated, with restrictions placed on how many courses one might eat, depending on status. A cardinal was permitted nine dishes at a meal while those earning less than £40 a year (which is to say most people) were allowed only two courses, plus soup. Happily, since Henry VIII’s break with Rome, eating meat on Friday was no longer a hanging offense, though anyone caught eating meat during Lent could still be sent to prison for three months. Church authorities were permitted to sell exemptions to the Lenten rule and made a lot of money doing so. It’s a surprise that there was much demand, for in fact most varieties of light meat, including veal, chicken, and all other poultry, were helpfully categorized as fish.

Good intentions preclude course corrections

Still probably too soon to tell, but at some point you have to make a call: do we continue with a reform that was well intentioned but is failing, or do we pull the plug because of the negative consequences? From Suspension Reform Is Tormenting Schools by Max Eden.

Under an Obama-era directive and the threat of federal civil rights investigation, thousands of American schools changed their discipline policies in an attempt to reduce out-of-school suspensions. Last year, education-policy researchers Matthew Steinberg and Joanna Lacoe reviewed the arguments for and against discipline reform in Education Next, concluding that little was known about the effects of the recent changes. But this year, the picture is becoming clearer: discipline reform has caused a school-climate catastrophe.

No doubt the reform was well-intentioned. The evidence that punishment of non-conforming behavior might not be effective was scanty but the evidence for abandoning punishments as a policy was also weak. Weak, but plausible.

The weakness of the evidence made poor grounds for dramatic policy change, but that was what was done.

To what effect? Eden reports:

Philadelphia is the latest city to fall into crisis, according to a new study conducted by Lacoe and Steinberg. The Philly school district serves 134,000 students, about 70 percent of whom are black or Latino. In the 2012–13 school year, Philadelphia banned suspensions for non-violent classroom misbehavior. Steinberg and Lacoe estimate that, compared with other districts, discipline reform reduced academic achievement by 3 percent in math and nearly 7 percent in reading by 2016. The authors do report that, among students with previous suspensions, achievement increased by 0.2 percent. But this only demonstrates that well-behaved students bore the brunt of the academic damage.

Lacoe and Steinberg report another small improvement among previously suspended students: their attendance rose by 1.43 days a year. But again, this development was more than offset by the negative trend in the broader student body. Truancy in Philadelphia schools had been declining steadily before the reform, but then rose at an astonishing rate afterward, from about 25 percent to over 40 percent.

Perhaps students were staying at home because they were scared to be at school. Suspensions for non-violent classroom misbehavior dropped after the ban, but suspensions for “serious incidents” rose substantially. The effort to reduce the racial suspension gap actually increased it; African-American kids spent an extra .15 days out of school.

Tiny improvements in outcomes for that tiny percentage of the student body who had bad behavior issues. But that small improvement for a small population came at the expense of large costs in learning effectiveness to the large majority of students.

Pull the plug now is the first instinct. But there are counter-arguments - "we have to improve implementation," "we need to give it a longer trial," "we need to improve school support for the initiative," etc. Yes, that is possible. It seems like the answer should be to pull the plug, reverse course, and reconsider what alternative strategies might work to reduce the costs of student discipline. But it seems likely that that is not what will happen. It is very hard to kill an ineffective program which has good intentions.

The Americans soon turned out a sophisticated B-24 heavy bomber of some 100,000 parts every sixty-three minutes

From Carnage and Culture by Victor Davis Hanson. Page 340.

On the Battle of Midway.

In strictly military terms the number of dead at Midway was not large—fewer than 4,000 in the two fleets. The losses were a mere fraction of what the Romans suffered at Cannae, or the Persians at Gaugamela, and much less costly than the bloodbaths of the great sea battles of Salamis, Lepanto, Trafalgar, and Jutland—or the Japanese slaughter to come at Leyte Gulf. But the sinking of the carriers represented an irreplaceable investment of millions of days of precious skilled labor, and even scarcer capital—and the only capability of the Japanese to destroy both the American fleet and Pacific bases. More than one hundred of the best carrier pilots perished in one day, equal to the entire graduating class of naval aviators that Japan could turn out in a single year. Never had the Japanese military lost so dramatically when technology, matériel, experience, and manpower were so decidedly in its favor. Back in Washington, D.C., Admiral Ernest J. King, chief of all U.S. naval operations, concluded of the action of June 4 that the battle of Midway had been the first decisive defeat of the Japanese navy in 350 years and had restored the balance of naval power in the Pacific.

Again, the carriers themselves were irreplaceable. During the entire course of World War II the Japanese launched only seven more of such enormous ships; the Americans in contrast would commission more than one hundred fleet, light, and escort carriers by war’s end. The Americans would also build or repair twenty-four battleships—despite losing nearly the entire fleet of the latter at Pearl Harbor—and a countless number of heavy and light cruisers, destroyers, submarines, and support ships. During the four years of the war the Americans constructed sixteen major warships for every one the Japanese built.

Worse still for the Japanese, the highest monthly production of all models of Japanese navy and army aircraft rarely exceeded 1,000 planes, and by summer 1945 the sum was scarcely half that due to American bombing, the need for factory dispersal, and matériel and manpower shortages. In contrast, the Americans soon turned out a sophisticated B-24 heavy bomber of some 100,000 parts every sixty-three minutes; American aircraft workers, who vastly outnumbered the Japanese, were also four times more productive than their individual enemy counterparts. By August 1945, in less than four years after the war had begun, the United States had produced nearly 300,000 aircraft and 87,620 warships. Even as early as mid-1944, American industry was building entire new fleets every six months, replete with naval aircraft comparable in size to the entire American force at Midway. After 1943, both American ships and airplanes—sixteen new Essex-class carriers outfitted with Helldiver dive-bombers, Corsair and Hellcat fighters, and Avenger torpedo bombers—were qualitatively and quantitatively superior to anything in the Japanese military. The modern Iowa-class battleships that appeared in the latter half of the war were better in speed, armament, range, and defensive protection than anything commissioned in the Japanese navy and were far more effective warships than even the monstrous Yamato and Musashi. Within a few months after Midway, not only had the United States naval and air armies made up all the losses from Midway, but its entire armed forces were growing at geometric rates, while the Japanese navy actually began to shrink as outmoded and often bombed-out factories could not even replace obsolete ships and planes lost to American guns, let alone manufacture additional ones. This was the Arsenal of Venice and Cannae’s aftermath all over again.

Haldane Principle - Ovem lupo committere

The Haldane Principle from Wikipedia.

In British research policy, the Haldane principle is the idea that decisions about what to spend research funds on should be made by researchers rather than politicians. It is named after Richard Burdon Haldane, who in 1904 and from 1909 to 1918 chaired committees and commissions which recommended this policy.

Hmmm. In the common parlance.

Beneficiaries of free stuff should be the only ones to decide how that free stuff is distributed.

Put that way, it is patently absurd. I suspect what Haldane was getting at is that research funds should be distributed based on evidence-based decision-making.

A nice sentiment, but constantly undermined in reality by the Iron Law of Oligarchy by Robert Michels.

Michels' theory states that all complex organizations, regardless of how democratic they are when started, eventually develop into oligarchies. Michels observed that since no sufficiently large and complex organization can function purely as a direct democracy, power within an organization will always get delegated to individuals within that group, elected or otherwise.

[snip]

According to Michels all organizations eventually come to be run by a "leadership class", who often function as paid administrators, executives, spokespersons, political strategists, organizers, etc. for the organization. Far from being "servants of the masses", Michels argues this "leadership class," rather than the organization's membership, will inevitably grow to dominate the organization's power structures. By controlling who has access to information, those in power can centralize their power successfully, often with little accountability, due to the apathy, indifference and non-participation most rank-and-file members have in relation to their organization's decision-making processes. Michels argues that democratic attempts to hold leadership positions accountable are prone to fail, since with power comes the ability to reward loyalty, the ability to control information about the organization, and the ability to control what procedures the organization follows when making decisions. All of these mechanisms can be used to strongly influence the outcome of any decisions made 'democratically' by members.

This is related to The Logic of Collective Action: Public Goods and the Theory of Groups by Mancur Olson. From Wikipedia.

It develops a theory of political science and economics of concentrated benefits versus diffuse costs. Its central argument is that concentrated minor interests will be overrepresented and diffuse majority interests trumped, due to a free-rider problem that is stronger when a group becomes larger.

The dynamic of concentrated benefits and dispersed costs is also what underpins Eric Hoffer's observation in The Temper of Our Time:

What starts out here as a mass movement ends up as a racket, a cult, or a corporation.

Of course, we don't need to be so highfalutin.

We have adages that cover this inclination to let the beneficiaries determine the costs to be imposed on others. In the US it is "Don't set the fox to guard the henhouse" but the Romans had

Ovem lupo committere

- To set a wolf to guard sheep

Wednesday, December 27, 2017

For obscure reasons Puritans resented the law and were often fined for flouting it.

From Shakespeare The World as Stage by Bill Bryson. Page 30.

Sumptuary laws, as they were known, laid down precisely, if preposterously, who could wear what. A person with an income of £20 a year was permitted to don a satin doublet but not a satin gown, while someone worth £100 a year could wear all the satin he wished, but could have velvet only in his doublets, but not in any outerwear, and then only so long as the velvet was not crimson or blue, colors reserved for knights of the Garter and their superiors. Silk netherstockings, meanwhile, were restricted to knights and their eldest sons, and to certain—but not all—envoys and royal attendants. Restrictions existed, too, on the amount of fabric one could use for a particular article of apparel and whether it might be worn pleated or straight and so on through lists of variables almost beyond counting.

The laws were enacted partly for the good of the national accounts, for the restrictions nearly always were directed at imported fabrics. For much the same reasons, there was for a time a Statute of Caps, aimed at helping domestic cap makers through a spell of depression, which required people to wear caps instead of hats. For obscure reasons Puritans resented the law and were often fined for flouting it. Most of the other sumptuary laws weren’t actually much enforced, it would seem. The records show almost no prosecutions. Nonetheless they remained on the books until 1604.

For they themselves enjoy the prize of victory

From Carnage and Culture by Victor Davis Hanson. Page 334.

Now where men are not their own masters and independent, but are ruled by despots, they are not really militarily capable, but only appear to be warlike. . . . For men’s souls are enslaved and they refuse to run risks readily and recklessly to increase the power of somebody else. But independent people, taking risks on their own behalf and not on behalf of others, are willing and eager to go into danger, for they themselves enjoy the prize of victory. So institutions contribute a great deal to military valor.

—HIPPOCRATES, Airs, Waters, Places (16, 23)

Running the numbers without taking into account statistics

From The surprising thing Google learned about its employees — and what it means for today’s students by Valerie Strauss.

I have speculated a number of times in the past that at least some portion of the cognitive pollution entering the public discussion is perhaps due not to ideological bias but to innumeracy on the part of journalists and editors. It is, of course, not either/or; bias and innumeracy might be operating in conjunction.

In the Strauss article, drawing on a recent book by Cathy N. Davidson, the proposition is advanced that:

All across America, students are anxiously finishing their “What I Want To Be …” college application essays, advised to focus on STEM (Science, Technology, Engineering, and Mathematics) by pundits and parents who insist that’s the only way to become workforce ready. But two recent studies of workplace success contradict the conventional wisdom about “hard skills.” Surprisingly, this research comes from the company most identified with the STEM-only approach: Google.

Sergey Brin and Larry Page, both brilliant computer scientists, founded their company on the conviction that only technologists can understand technology. Google originally set its hiring algorithms to sort for computer science students with top grades from elite science universities.

In 2013, Google decided to test its hiring hypothesis by crunching every bit and byte of hiring, firing, and promotion data accumulated since the company’s incorporation in 1998. Project Oxygen shocked everyone by concluding that, among the eight most important qualities of Google’s top employees, STEM expertise comes in dead last. The seven top characteristics of success at Google are all soft skills: being a good coach; communicating and listening well; possessing insights into others (including others different values and points of view); having empathy toward and being supportive of one’s colleagues; being a good critical thinker and problem solver; and being able to make connections across complex ideas.

Those traits sound more like what one gains as an English or theater major than as a programmer. Could it be that top Google employees were succeeding despite their technical training, not because of it? After bringing in anthropologists and ethnographers to dive even deeper into the data, the company enlarged its previous hiring practices to include humanities majors, artists, and even the MBAs that, initially, Brin and Page viewed with disdain.

There is no doubt that under the right circumstances, diversity of knowledge domains within a team of equally accomplished individuals can add much insight, innovation, and cross-fertilization between domains.

But Davidson appears intent on buttressing an otherwise anodyne assertion by twisting the actual implications of the data. The conclusion she wants to arrive at is that the humanities are important (I concur) and they are equally valuable (which is not what the data indicates.)

STEM skills are vital to the world we live in today, but technology alone, as Steve Jobs famously insisted, is not enough. We desperately need the expertise of those who are educated to the human, cultural, and social as well as the computational.

No student should be prevented from majoring in an area they love based on a false idea of what they need to succeed. Broad learning skills are the key to long-term, satisfying, productive careers. What helps you thrive in a changing world isn’t rocket science. It may just well be social science, and, yes, even the humanities and the arts that contribute to making you not just workforce ready but world ready.

Without reading the book, there may be much nuanced insight that does not come across in this summary article.

What comes across, though, is a set of fairly fundamental cognitive errors. It centers on this finding:

Project Oxygen shocked everyone by concluding that, among the eight most important qualities of Google’s top employees, STEM expertise comes in dead last. The seven top characteristics of success at Google are all soft skills: being a good coach; communicating and listening well; possessing insights into others (including others different values and points of view); having empathy toward and being supportive of one’s colleagues; being a good critical thinker and problem solver; and being able to make connections across complex ideas.

I suspect there are actually four errors going on simultaneously: Category Error, Range Restriction error, Survivorship Bias error, and Simpson's Paradox.

It seems that the Washington Post commenters are alert to the errors. It makes you wonder, stuffed to the gills with STEM talent as Google is, how they allowed such a set of mistakes to color their own internal research.

Category Error - The category of "coders/technologists" is not the same as the category of "people with STEM degrees." This isn't the biggest error, but it is an important one because it almost de facto reduces the validity of the conclusions. In our vernacular, we are playing fast and loose with our terminology. There are plenty of coders/technologists who entered the field via a route different than a STEM degree (or even a degree at all) and there are plenty of STEM degree people who redirect into non-STEM fields of endeavor. Using a degree as an indication of interest and accomplishment in technology is only a very loose proxy.

Range Restriction - Freddie deBoer has a good description of range restriction, here. When you select on one attribute among many which might be contributive to outcome, the other attributes become more explanatory of outcomes than does the range restricted attribute selected. As someone on Twitter put it, "Conditional on having top-1% technical skill, technical skill is not very predictive of job success."

Survivorship Bias - The conditionality referenced above is a form of survivorship bias. "The logical error of concentrating on the people or things that made it past some selection process and overlooking those that did not, typically because of their lack of visibility. This can lead to false conclusions in several different ways. It is a form of selection bias."

Simpson's Paradox - "A phenomenon in probability and statistics, in which a trend appears in several different groups of data but disappears or reverses when these groups are combined." The patterns and correlations you might see in a sub-population (STEM degrees from prestigious schools population) can vary or be the opposite of those that you see in an amalgamated population (all degrees from prestigious schools.)

Some of the Washington Post comments which tackle these issues:

If the study did not include control groups that weren't subject to the initial hiring criteria, it is likely the results are strongly skewed by cofactors related to the study population. Among teams that all start out with very strong STEM skills (based on Google hiring practices) OF COURSE the teams that excel are going to be the ones that differentiate themselves through some other skill set(s).

The difference in STEM abilities among a population that was selected specifically for their STEM abilities is going to be much smaller than the variation among the other skills they weren't selected for. It's a bit like saying professional football players who did well in their college classes tend to stand out. It's because every professional football player has tremendous physical skills, but only some of them also did very well in school.

[next]

"being a good coach; communicating and listening well; possessing insights into others (including others different values and points of view); having empathy toward and being supportive of one’s colleagues; being a good critical thinker and problem solver; and being able to make connections across complex ideas.

Those traits sound more like what one gains as an English or theater major than as a programmer."

as anyone who has spent (serious) time with either english and/or theater majors would tell you, this inferential leap is complete, total and utter nonsense...there is little-to-nothing about the way english and/or theater has been or is being taught that relates to 'good coaching' or 'empathy' in the slightest....i am the first person to argue that each and every one of these so-called 'soft skills' are desirable and important....but the assertion/argument that you are more likely to acquire/learn these skills in a humanities curriculum vs a stem curriculum is nonsense on stilts....let's be clear: any curriculum that explicitly cultivates critical thinking skills, collaborative projects and measurable/meaningful deliverables is good for developing these skills....but, as someone who both teaches and does interdisciplinary research in a world-class university, i beg parents and students alike to recognize these sorts of curricula are the exception, not the rule....ms davidson's 'analysis' is sadly and fatally flawed....her heart is surely in the right place; her facts are not

[next]

Graduate education in the humanities rarely teaches critical thinking skills beyond textual analysis. More than anything else, graduate education in the humanities teaches students to compete with each other, while navigating the politics of their chosen field. Nor do critical thinking, collaboration, empathy, etc., flourish in the highly competitive world of tenure-track Ph.D.'s. The idea that these same Ph.D.'s will somehow transmit soft skills to their students is, more often than not, fatuous -- how can they teach what they've never learned?

[next]

You start by saying they only hired people with good grades in stem from top schools, and then finished by saying that STEM was ranked last on their list of important traits. As a statistician, I can tell you what your problem is. STEM was just a common factor all hires had, so of course it is last on the list. Saying people had STEM and insight into people means that they have mastered everything basically. It doesn't mean that an English major can succeed at Google.

[next]

After working in the tech field for almost 50 years (starting as a programmer, then manager of programmers, managing system programmers running data centers, more software development and management experience, ending in my own consulting business) it was obvious to me that my success was because of my people skills and "soft" skills. BUT, I could never have done the job if I had not had the innate technical abilities and earned respect of my subordinates. And while some of the soft skills came from education (not much team oriented projects in the 60's), most of it came from experience. And innate personality. The important thing is to recognize the innate intellect and potential and nurture it thru education as well as on-the-job mentoring and training.

[next]

Indeed. If Google hired a bunch of people with non-STEM degrees, and then evaluated them, what qualities do you think will come out to be most important?

[next]

The key to this article is that having the computer science degree is what got people in the door, having softer skills made them a better employee.

Like it or not our economy rewards those with skills that are numbers, systems, analytical and scientifically focused. Softer skills are indeed important but provide depth to a technical skillset, not a substitute for one. I tell my college-bound kids that their primary degree should be technically oriented but blending this with a minor or coursework in design, history, philosophy or so forth will round them out and also open their mind to approaching problems and issues in new and unique ways. Some chafe at this notion but we need citizens with a moral voice too, something math and computer science won't provide on it's own. We need people who can discuss whether some new science or technology should be done, not just can it be done.

There are all sorts of interesting issues in here of causal origins of innovation, technical competence, team effectiveness, group productivity, etc.

They warrant investigation and research. Progress is not facilitated by erroneous interpretation (whether owing to innumeracy or ignorance or bias or ideology). In a STEM environment dominated by people with technical skills, it is unsurprising that those with corresponding skills in team management or communication might shine above and beyond their counterparts with just the equally superior technical skills.

But if, from that, you conclude that everyone can be equally successful in tech regardless of their degree and that is what you communicate to young adults, then you are misleading them.

Yes, there are complex issues which need high-achieving individuals with a broad array of capabilities to tackle them. But if you want to achieve tech industry levels of compensation, find a way to be motivated to not only pass, but excel in STEM fields. And anticipate that if you have a residual competency or avocation in people management or communication, that will bode well for your career success.

But don't tell kids who want to major in history that they can anticipate achieving tech industry levels of compensation with just a history degree. That is not where the probabilities lie.
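For anyone who wants to see the range restriction effect rather than take it on faith, here is a minimal simulation sketch. It is not Google's data or method. Everything in it is an assumption made up for illustration: the function names `pearson` and `simulate`, the idea that performance is simply technical skill plus soft skill plus noise with equal weights, the normal distributions, and the rule of hiring only the top 1% on technical skill.

```python
import random
from math import sqrt


def pearson(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def simulate(n=100_000, top_fraction=0.01, seed=0):
    """Return (corr(tech, perf), corr(soft, perf)) for everyone and for hires.

    Hypothetical model: tech and soft skill are independent standard normal
    scores, and performance weights them equally, plus some noise.
    """
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        tech = rng.gauss(0, 1)
        soft = rng.gauss(0, 1)
        performance = tech + soft + rng.gauss(0, 0.5)
        people.append((tech, soft, performance))

    # Hypothetical hiring rule: keep only the top slice on technical skill.
    people.sort(key=lambda person: person[0], reverse=True)
    hired = people[: int(n * top_fraction)]

    def correlations(group):
        tech, soft, perf = zip(*group)
        return pearson(tech, perf), pearson(soft, perf)

    return {"everyone": correlations(people), "hired": correlations(hired)}


if __name__ == "__main__":
    for group, (tech_r, soft_r) in simulate().items():
        print(f"{group:>8}: corr(tech, perf)={tech_r:.2f}  corr(soft, perf)={soft_r:.2f}")
```

By construction the two skills matter equally, and across the whole population they correlate with performance about equally. Among the hires, technical skill should look far less predictive than soft skill, simply because everyone who got through the door already has it.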

Metaphors and individualism

From The empty brain by Robert Epstein.

Some parts of his essay I believe to be true but other parts include assertions about which I have doubts. I am not in a position to dispute Epstein. His domain mastery far exceeds mine.

What I do like is his discussion of the overweening influence of our metaphors on how we interpret the world. In particular, he looks at the evolving metaphor for the mind. My summary:

Spirit - life breathed into clay.

Hydraulic model - the flow of humours.

Mechanical model - gears and springs.

Electrical or telegraphic model

Computer model

Information processing model

He does a good job sketching out how the metaphorical representation both enhances and constrains our understanding. The map is not the terrain and the metaphor is not the mind.

The essay is rich in suggestive insights.

As we navigate through the world, we are changed by a variety of experiences. Of special note are experiences of three types: (1) we observe what is happening around us (other people behaving, sounds of music, instructions directed at us, words on pages, images on screens); (2) we are exposed to the pairing of unimportant stimuli (such as sirens) with important stimuli (such as the appearance of police cars); (3) we are punished or rewarded for behaving in certain ways.

We become more effective in our lives if we change in ways that are consistent with these experiences – if we can now recite a poem or sing a song, if we are able to follow the instructions we are given, if we respond to the unimportant stimuli more like we do to the important stimuli, if we refrain from behaving in ways that were punished, if we behave more frequently in ways that were rewarded.

I especially liked this observation.

Because neither ‘memory banks’ nor ‘representations’ of stimuli exist in the brain, and because all that is required for us to function in the world is for the brain to change in an orderly way as a result of our experiences, there is no reason to believe that any two of us are changed the same way by the same experience. If you and I attend the same concert, the changes that occur in my brain when I listen to Beethoven’s 5th will almost certainly be completely different from the changes that occur in your brain. Those changes, whatever they are, are built on the unique neural structure that already exists, each structure having developed over a lifetime of unique experiences.

This tackles a core element in the deterministic worldview - the idea that all things are directly causative and that the brain can be shaped to yield predictable outcomes. The alternate view, and the one to which I subscribe, is that while there is a degree of deterministic mechanism and there are some base levels of commonality among all individuals, for practical intents, we are all unique. What we experience, how we experience it, and what significance we attach to the experience means that everyone is as profoundly different from one another as they are similar in some basic ways.

Tuesday, December 26, 2017

Transfer of Learning

From Low Transfer of Learning: The Glass Is Half Full by Bryan Caplan.

I love it when I find a whole new domain of knowledge that reveals to me research of which I was unaware but pertinent to my interests or needs. Wikipedia's description of the field of Transfer of Learning:

Transfer of learning is the dependency of human conduct, learning, or performance on prior experience. The notion was originally introduced as transfer of practice by Edward Thorndike and Robert S. Woodworth. They explored how individuals would transfer learning in one context to another, similar context – or how "improvement in one mental function" could influence a related one. Their theory implied that transfer of learning depends on how similar the learning task and transfer tasks are, or where "identical elements are concerned in the influencing and influenced function", now known as the identical element theory.

So there is the theory of Transfer of Learning. What is the evidence? From Caplan.

Teachers like to think that no matter how useless their lessons appear, they are "teaching their students how to think." Under the heading of "Transfer of Learning," educational psychologists have spent over a century looking for evidence that this sort of learning actually occurs.

The results are decidedly negative. Learning is highly specific. One decent summary:

[T]ransfer is especially important to learning theory and educational practice because very often the kinds of transfer hoped for do not occur. The classic investigation of this was conducted by the renowned educational psychologist E. L. Thorndike in the first decades of the 20th century. Thorndike examined the proposition that studies of Latin disciplined the mind, preparing people for better performance in other subject matters. Comparing the performance in other academic subjects of students who had taken Latin with those who had not, Thorndike (1923) found no advantage of Latin studies whatsoever. In other experiments, Thorndike and Woodworth (1901) sought, and generally failed to find, positive impact of one sort of learning on another...Thorndike's early and troubling findings have reemerged again and again in other investigations...

Most educational psychologists are dismayed by what they've discovered about Transfer of Learning. (Here's one eloquent example). After reviewing the National Assessment of Adult Literacy, though, I realized that researchers' moping is premature.

Read his post for his optimism.

I frequently see strong arguments made for Transfer of Learning in areas where I cannot find any actual evidence to support the hoped for conclusion.

One common claim is that reading literary fiction disposes a person to a more empathetic worldview and makes a person more effective. It is taken as an article of faith in many quarters. And, as far as I can tell, there is no credible evidence to support the supposition.

There isn't much attempted empirical research in the first place. Of the little that there is, most of it is purpose driven, i.e. they are setting out to prove that the effect is real rather than dispassionately seeking empirical evidence one way or the other.

There are a handful of studies, deeply flawed in design, and with too small non-random subject samples, which have found evidence for a very small effect size. In other words, the evidence suggests that reading literature does make one more empathetic but that the effect size is very small.

But among all the studies, the more rigorous the study design, the more random the sample, the larger the subject population, the more likely the result is null. There is no evidence to support an empathy development effect from reading literary fiction.

The second common claim I see is that such-and-such a program develops critical thinking. As with the empathy hypothesis, the evidence for effective Transfer of Learning in terms of critical thinking is minuscule.

The final area where I commonly see this faith in Transfer of Learning is in childhood literary circles. The faith is that if children read books with multicultural casts of characters, they will be more tolerant; if they read books with characters that "look" like them, they will be more confident; if they read books with more emotional content, they will be more empathetic.

The basis for believing any of these things is empirically true is de minimis.

And certainly, since my university days, there seems to have always been the background belief among people whom I know that universities were not a developmental interlude to cultivate deep knowledge but, rather, an opportunity to "learn how to learn." Would that it were so.

There seems to be little evidence supporting the idea of Transfer of Learning. Arum and Roksa seem to indicate that there is also little transfer of knowledge. Quite an opportunity for transformational change.

But exceptions were made

From Shakespeare The World as Stage by Bill Bryson. Page 29.

A reminder of the brutal nature of justice in former times. The years 1600-1900 were a critical period of transition. When everyone was closer to the edge of starvation, death, and insurrection, the punishments for crimes were almost inconceivably brutal. It was only with the emergence of prosperity that civil societies began to feel confident enough to move away from barbarous justice.

In fact he got off comparatively lightly, for punishments could be truly severe. Many convicted felons still heard the chilling words: “You shall be led from hence to the place whence you came . . . and your body shall be opened, your heart and bowels plucked out, and your privy members cut off and thrown into the fire before your eyes.” Actually by Elizabeth’s time it had become most unusual for felons to be disemboweled while they were still alive enough to know it. But exceptions were made. In 1586 Elizabeth ordered that Anthony Babington, a wealthy young Catholic who had plotted her assassination, should be made an example of. Babington was hauled down from the scaffold while still conscious and made to watch as his abdomen was sliced open and the contents allowed to spill out. It was by this time an act of such horrifying cruelty that it disgusted even the bloodthirsty crowd.

The Zulu three traditional sources of military efficacy: manpower, mobilization, and tactics

From Carnage and Culture by Victor Davis Hanson. Page 313.

Much myth and romance surround the Zulu military, but we can dispense with the popular idea that its warriors fought so well because of enforced sexual celibacy or the use of stimulant drugs—or even that they learned their regimental system and terrifying tactics of envelopment from British or Dutch tradesmen. Zulu men had plenty of sexual outlets before marriage, carried mostly snuff on campaign, only occasionally smoked cannabis, drank a mild beer, and created their method of battle advance entirely from their own experience from decades of defeating tribal warriors. The general idea of military regimentation, perhaps even the knowledge of casting high-quality metal spearheads, may have been derived from observation of early European colonial armies, but the refined system of age-class regiments and attacking in the manner of the buffalo were entirely indigenous developments.

The undeniable Zulu preponderance of power derived from three traditional sources of military efficacy: manpower, mobilization, and tactics. All three were at odds with almost all native African methods of fighting. The conquest of Bantu tribes in southeast Africa under Shaka’s leadership meant that for most of the nineteenth century until the British conquest—during the subsequent reigns of Kings Dingane (1828–40), Mpande (1840–72), and Cetshwayo (1872–79)—the Zulus controlled a population ranging between 250,000 and 500,000 and could muster an army of some 40,000 to 50,000 in some thirty-five impis, many times larger than any force, black or white, that Africa might field.

Unlike most other tribal armies of the bush, the Zulus were no mere horde that fought as an ad hoc throng. They did not stage ritual fights in which customary protocols and missile warfare discouraged lethality. Rather, Zulu impis were reflections of fundamental social mores of the Zulu nation itself, which was a society designed in almost every facet for the continuous acquisition of booty and the need for individual subjects to taste killing firsthand. If the Aztec warrior sought a record of captive-taking to advance his standing, then a Zulu could find little status or the chance to create his own household until he had “washed his spear” in the blood of an enemy.

Credenza, belief, poison

From The Tricky Art of Co-Existing: How to Behave Decently No Matter What Life Throws Your Way by Sandi Toksvig. Via Ann Althouse.

File under: Things I did not know.

I have a delightful friend who recently ordered a rather expensive sideboard for her home that she referred to as a credenza - which is of course the correct term. What she did not know is that the word comes from the Italian for "belief." In the sixteenth century it referred to the pre-tasting of food and drink by a servant to make sure his lord was not about to be poisoned. Eventually the word came to describe the piece of furniture that held the food before it was finally served. If pre-tasting is necessary in someone's home you may want to reconsider your friends.

Majora canamus

Christmas isn't quite complete without a good dose of Handel's Messiah. Sublime.

Let Us Sing of Greater Things by Rich Lowry provides a thumbnail sketch of the backstory.

A native German who lived in London, G.F. Handel was extraordinarily prolific, composing roughly 40 operas and 30 oratorios. His towering status isn’t in question. Beethoven, born nearly a hundred years later, deemed him “the master of us all.”

Although the “Messiah” is invariably called “Handel’s Messiah,” it was a collaboration. The librettist Charles Jennens, a devout Christian, provided the composer with a “scriptural collection,” the Biblical quotations that make up the text.

Jennens wrote a friend that he hoped Handel “will lay out his whole genius and skill upon it, that the composition may excel all his former compositions, as the subject excels every other subject. The subject is Messiah.”

He needn’t have worried. Handel completed a draft score in three weeks in the summer of 1741. The legend says that while composing the famous “Hallelujah” chorus, he had a vision of “the great God himself.” There is no doubt that artist and subject matter came together in one of the most inspired episodes in the history of Western creativity.

[snip]

On the title page of his “Messiah” word book, Charles Jennens quoted Virgil, “majora canamus,” or let us sing of greater things.

Monday, December 25, 2017

She slept with an old sword beside her bed

From Shakespeare The World as Stage by Bill Bryson. Page 26.

Though it was an age of huge religious turmoil, and although many were martyred, on the whole the transition to a Protestant society proceeded reasonably smoothly, without civil war or wide-scale slaughter. In the forty-five years of Elizabeth’s reign, fewer than two hundred Catholics were executed. This compares with eight thousand Protestant Huguenots killed in Paris alone during the Saint Bartholomew’s Day massacre in 1572, and the unknown thousands who died elsewhere in France. That slaughter had a deeply traumatizing effect in England—Christopher Marlowe graphically depicted it in The Massacre at Paris and put slaughter scenes in two other plays—and left two generations of Protestant Britons at once jittery for their skins and ferociously patriotic.

Elizabeth was thirty years old and had been queen for just over five years at the time of William Shakespeare’s birth, and she would reign for thirty-nine more, though never easily. In Catholic eyes she was an outlaw and a bastard. She would be bitterly attacked by successive popes, who would first excommunicate her and then openly invite her assassination. Moreover for most of her reign a Catholic substitute was conspicuously standing by: her cousin Mary, Queen of Scots. Because of the dangers to Elizabeth’s life, every precaution was taken to preserve her. She was not permitted to be alone out of doors and was closely guarded within. She was urged to be wary of any presents of clothing designed to be worn against her “body bare” for fear that they might be deviously contaminated with plague. Even the chair in which she normally sat was suspected at one point of having been dusted with infectious agents. When it was rumored that an Italian poisoner had joined her court, she had all her Italian servants dismissed. Eventually, trusting no one completely, she slept with an old sword beside her bed.

Sunday, December 24, 2017

The legibility of Shakespeare.

From Shakespeare The World as Stage by Bill Bryson. Page 25.

We are lucky to know as much as we do. Shakespeare was born just at the time when records were first kept with some fidelity. Although all parishes in England had been ordered more than a quarter of a century earlier, in 1538, to maintain registers of births, deaths, and weddings, not all complied. (Many suspected that the state’s sudden interest in information gathering was a prelude to some unwelcome new tax.) Stratford didn’t begin keeping records until as late as 1558—in time to include Will, but not Anne Hathaway, his older-by-eight-years wife.

Relates to James C. Scott's idea of legibility in Seeing Like a State. The state has an interest in measuring things to make them legible to the state, that legibility being needed for all sorts of reasons - including the laying of taxes.

Relative advantages

From Carnage and Culture by Victor Davis Hanson. Page 261.

Venice’s strength vis-à-vis the Turks lay not so much in geography, natural resources, religious zealotry, or a commitment to continual warring and raiding as in its system of capitalism, consensual government, and devotion to disinterested research. Only that way could skilled nautical engineers, pilots, and trained admirals trump enormous Ottoman advantages in territory, tribute, a cultural tradition of warrior nomadism, and sheer manpower. The sultan sought out European traders, ship designers, seamen, and imported firearms—even portrait painters—while almost no Turks found their services required in Europe.

Christmas 2017

From Illuminating the medieval Nativity by Amy Jeffs. The Nativity, the Annunciation to the Shepherds and the Adoration of the Magi. The paintings are from the Cotton MS Caligula A VII/1 manuscript.

The Nativity.

The Annunciation to the Shepherds.

The Adoration of the Magi.

Bring out the tall tales now that we told by the fire

From A Child's Christmas in Wales by Dylan Thomas

The silent one-clouded heavens drifted on to the sea. Now we were snow-blind travelers lost on the north hills, and vast dewlapped dogs, with flasks round their necks, ambled and shambled up to us, baying "Excelsior." We returned home through the poor streets where only a few children fumbled with bare red fingers in the wheel-rutted snow and cat-called after us, their voices fading away, as we trudged uphill, into the cries of the dock birds and the hooting of ships out in the whirling bay. And then, at tea the recovered Uncles would be jolly; and the ice cake loomed in the center of the table like a marble grave. Auntie Hannah laced her tea with rum, because it was only once a year.

Bring out the tall tales now that we told by the fire as the gaslight bubbled like a diver. Ghosts whooed like owls in the long nights when I dared not look over my shoulder; animals lurked in the cubbyhole under the stairs where the gas meter ticked. And I remember that we went singing carols once, when there wasn't the shaving of a moon to light the flying streets. At the end of a long road was a drive that led to a large house, and we stumbled up the darkness of the drive that night, each one of us afraid, each one holding a stone in his hand in case, and all of us too brave to say a word. The wind through the trees made noises as of old and unpleasant and maybe webfooted men wheezing in caves.

Saturday, December 23, 2017